Optimization is a common form of decision making and is ubiquitous in our society. Its applications range from solving Sudoku puzzles to arranging seating at a wedding banquet. The same technology can schedule planes and their crews, coordinate the production of steel, and organize the transportation of iron ore from the mines to the ports. Good decisions in manpower and material resource management also allow corporations to improve profit by millions of dollars. Similar problems underpin much of our daily lives: they are part of determining daily delivery routes for packages, making school timetables, and delivering power to our homes. Despite their fundamental importance, all of these problems are a nightmare to solve using traditional undergraduate computer science methods.

This course is intended for students who have completed Basic Modelling for Discrete Optimization. In this course you will learn much more about solving challenging discrete optimization problems by stating the problem in a state-of-the-art high-level modeling language and letting constraint-solving software do the rest. The course focuses on debugging and improving models, encapsulating parts of models in predicates, and tackling advanced scheduling and packing problems. As you master this advanced technology, you will be able to tackle problems that were previously inconceivable to solve.

Watch the course promotional video here: https://www.youtube.com/watch?v=hc3cBvtrem0&t=8s

2.4.1 Square Packing

Reviews

4.9 (133 ratings)

- 5 stars: 93.98%

- 4 stars: 6.01%

BO

Nov 15, 2019

Thank you so much! This course wasn't easy, but the material and assignments are very well thought out. Kept me motivated all the way through.

YY

Feb 20, 2018

Great course! Good presentation and lectures, challenging assignments. Learned a lot

From the lesson

Packing

In this module, you will learn the important application of packing, from the packing of squares to rectilinear shapes with and without rotation. Again, you will see how to model some of the complex constraints that arise in these applications.
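
To give a sense of the constraint at the heart of square packing, here is a minimal Python sketch that checks whether a proposed placement is valid. It is not taken from the course materials, which use a high-level modeling language rather than Python; the function names `no_overlap` and `is_valid_packing` and the `(x, y, size)` tuple layout are assumptions made for illustration. Two axis-aligned squares do not overlap exactly when one lies entirely to the left of, to the right of, below, or above the other.

```python
from itertools import combinations


def no_overlap(a, b):
    """Return True if squares a and b do not overlap.

    Each square is a hypothetical (x, y, size) tuple, where (x, y) is the
    bottom-left corner. Touching edges do not count as overlap.
    """
    ax, ay, asz = a
    bx, by, bsz = b
    return (
        ax + asz <= bx      # a is entirely left of b
        or bx + bsz <= ax   # b is entirely left of a
        or ay + asz <= by   # a is entirely below b
        or by + bsz <= ay   # b is entirely below a
    )


def is_valid_packing(squares, width, height):
    """Check that all squares fit inside a width x height box without overlap."""
    inside = all(
        x >= 0 and y >= 0 and x + s <= width and y + s <= height
        for x, y, s in squares
    )
    disjoint = all(no_overlap(a, b) for a, b in combinations(squares, 2))
    return inside and disjoint


# Squares of sizes 3, 2 and 1 placed in a 5 x 3 box.
placement = [(0, 0, 3), (3, 0, 2), (3, 2, 1)]
print(is_valid_packing(placement, 5, 3))
```

A constraint solver searches for positions that satisfy this same four-way disjunction for every pair of squares; the sketch above only verifies a placement that has already been chosen.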

Taught By

Prof. Jimmy Ho Man Lee

Professor

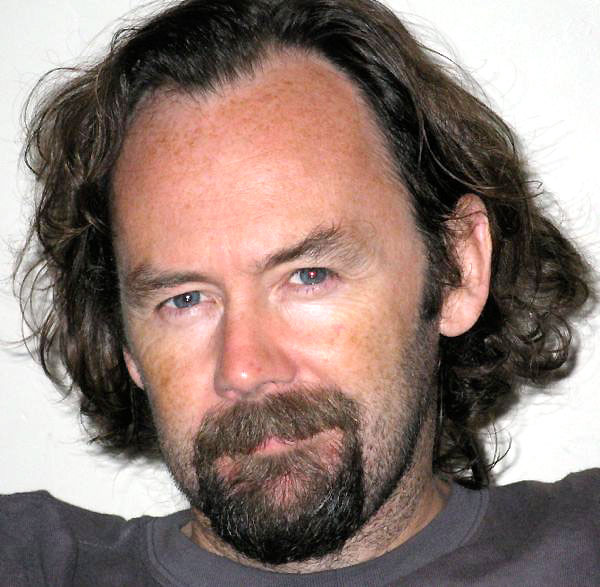

Prof. Peter James Stuckey

Professor